We’ve already seen products that turn the bare human hand into a virtual controller, using cameras to identify hand and finger movements, which they then translate into digital commands. Google’s Project Soli wants to take things to the next level, using radar technology to enable new types of touch-free interaction.

Designed to turn the human hand into “a natural, intuitive interface” for controlling electronic devices, the technology is claimed to track high-speed, sub-millimeter motions with a high level of accuracy. That means it can recognize far more than large swipes and taps, so it can be programmed to respond to minute hand movements, paving the way for a whole load of control possibilities. Want to turn a virtual knob, adjust a virtual slider, or play air guitar? This thing should make it possible.

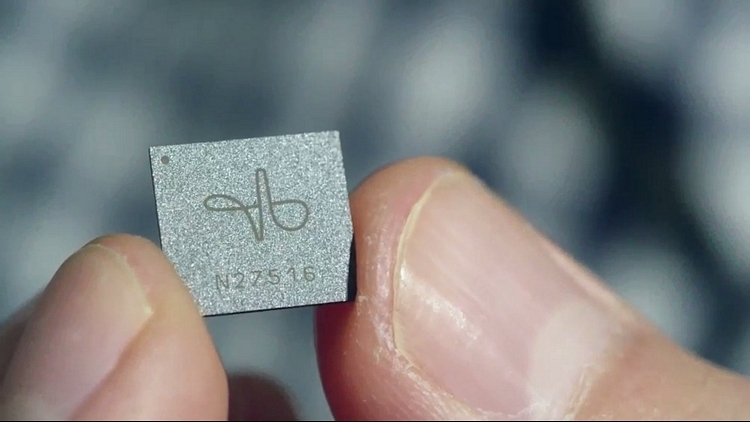

Project Soli consists of a gesture radar sensor that’s been condensed into a chip measuring 5 x 5 mm, making it small enough to fit inside the current generation of wearables. The sensor uses a broad radar beam to cover the whole hand, estimating the hand’s position by analyzing changes in the reflected signal over time. Running at 60 GHz, it can capture motion at up to 10,000 frames per second, allowing it to recognize and respond to movement without much delay.
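To put those figures in perspective, here is a quick back-of-the-envelope sketch in Python. It only uses the 60 GHz and 10,000 frames-per-second numbers quoted above plus the speed of light. The function names are made up for illustration, and the phase-shift calculation is the generic textbook relation for a reflected wave, not a description of how Soli actually processes its signal.

```python
# Rough arithmetic on the figures quoted in the article.
# All names here are hypothetical and for illustration only.

SPEED_OF_LIGHT_M_S = 299_792_458  # metres per second
CARRIER_HZ = 60e9                 # 60 GHz, as quoted
FRAME_RATE_HZ = 10_000            # frames per second, as quoted


def wavelength_mm(freq_hz: float) -> float:
    """Wavelength of a radio wave at the given frequency, in millimetres."""
    return SPEED_OF_LIGHT_M_S / freq_hz * 1000


def frame_interval_ms(frames_per_second: float) -> float:
    """Time between two consecutive frames, in milliseconds."""
    return 1000 / frames_per_second


def round_trip_phase_shift_deg(displacement_mm: float, freq_hz: float) -> float:
    """Phase change of a reflected wave when the target moves by
    displacement_mm toward or away from the sensor. The path length
    changes by twice the displacement (out and back). This is an
    assumption about a generic radar, not Soli's documented method."""
    return 360 * 2 * displacement_mm / wavelength_mm(freq_hz)


if __name__ == "__main__":
    print(f"Wavelength at 60 GHz: {wavelength_mm(CARRIER_HZ):.2f} mm")
    print(f"Time per frame: {frame_interval_ms(FRAME_RATE_HZ):.1f} ms")
    shift = round_trip_phase_shift_deg(0.5, CARRIER_HZ)
    print(f"Phase shift for a 0.5 mm move: {shift:.0f} degrees")
```

The wavelength works out to roughly 5 mm and each frame lasts about a tenth of a millisecond, so even a half-millimeter finger movement produces a sizable change in the reflected signal. That gives some intuition for why the sub-millimeter claim is plausible.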

As of now, the first prototype chip is finished, although the team is still finalizing the prototype development board to use with it. Once that’s done, they plan to release a software API that developers can use to integrate the tech into their own projects.

Hit the link below to learn more.