It may look like any box lining the shelves in the server room at work, but there's nothing ordinary about the Nvidia DGX-1. Housed inside that enclosure is a GPU-accelerated supercomputer that delivers throughput equivalent to 250 x86-based CPU servers.

Designed to handle the processing demands of deep learning and artificial intelligence, the system boasts up to 170 teraflops of peak half-precision (FP16) performance, allowing it to train deep neural networks 12 times faster than a system built on Nvidia's Maxwell architecture from just last year. That means whether you're working on self-driving cars, autonomous drones, or your very own version of Skynet, this thing should help your AI systems realize their potential like no other off-the-shelf server box can.

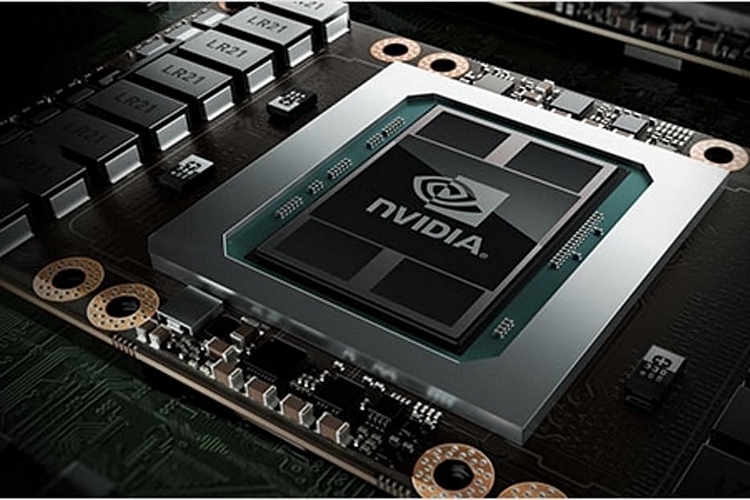

The Nvidia DGX-1 is a turnkey system that combines eight 16GB Tesla P100 GPUs, a pair of Intel Xeon CPUs, 512GB of RAM, four 1.92TB SSDs, dual 10GbE and quad 100Gb InfiniBand networking, and an NVLink Hybrid Cube Mesh interconnect, all packed into a single box. Each one comes preloaded with a suite of software optimized for deep learning, including NVIDIA DIGITS (a deep learning training system), NVIDIA cuDNN (a library of primitives for building deep neural networks), and optimized versions of deep learning frameworks such as Caffe, Theano, and Torch. As you can imagine, this is no lightweight box, tipping the scales at a hefty 132 pounds.
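If you're wondering where the 170-teraflop figure comes from, it's roughly what you get by adding up the eight GPUs. The quick sketch below runs that arithmetic, along with the box's total GPU memory and raw SSD capacity. One assumption not stated in the article: it uses Nvidia's published rating of about 21.2 teraflops peak FP16 for each NVLink (SXM2) Tesla P100. The `aggregate` helper is just an illustrative name.

```python
# Back-of-the-envelope check of the DGX-1's headline numbers.
# Assumption (not from the article): each NVLink (SXM2) Tesla P100 is
# rated by Nvidia at about 21.2 teraflops of peak half-precision (FP16).
P100_FP16_TFLOPS = 21.2
GPU_COUNT = 8
GPU_MEMORY_GB = 16      # per GPU, from the article
SSD_COUNT = 4
SSD_CAPACITY_TB = 1.92  # per drive, from the article


def aggregate(per_unit: float, count: int) -> float:
    """Hypothetical helper: total of `count` identical units, rounded to 2 decimals."""
    return round(per_unit * count, 2)


peak_fp16 = aggregate(P100_FP16_TFLOPS, GPU_COUNT)
gpu_memory = aggregate(GPU_MEMORY_GB, GPU_COUNT)
raw_storage = aggregate(SSD_CAPACITY_TB, SSD_COUNT)  # before formatting or RAID

print(f"Peak FP16: {peak_fp16} TFLOPS")      # 169.6, i.e. roughly 170
print(f"GPU memory: {gpu_memory} GB")         # 128
print(f"Raw SSD storage: {raw_storage} TB")   # 7.68
```

Keep in mind these are peak theoretical figures; real training throughput depends on the model, the framework, and how well the work spreads across the NVLink mesh.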

No release date has been given, but the Nvidia DGX-1 is priced at $129,000.